Introduction

In 2016 the European Union created the General Data Protection Regulation (GDPR), and a few months from now, on May 25, 2018 to be precise, it will go into full effect. This regulation has sweeping implications for any EU business that deals with personal data and for any business outside of the EU that stores or processes data of EU citizens. Did you suddenly get emails from webapps that are going to delete your account after years of non-use? That’s right, that’s GDPR at work.

As a cloud intranet software provider the GDPR affects everything we do, so we knew we had to start our preparations for compliance well in advance of the May 2018 deadline. In the past year we’ve been very busy, and in this document we describe the measures and precautions we’ve had to take and reflect on some of the lessons we’ve learned.

We’ve created this document in the hope that it is of value to others who are grappling with the implications of GDPR. We found that it is easy to overlook complete areas that are affected by GDPR. And we had it relatively easy: we are a 100% digital cloud-based company. We don’t have filing cabinets filled with documents, or old computers stuck in dusty closets. The only thing we had to worry about is the software we wrote ourselves. And yet, becoming GDPR compliant was still a ton of work. So if we had to go through all this trouble as a small company with only a couple of products, we cannot imagine how big the impact of GDPR will be for larger companies that have many employees and products that go back decades. Suffice it to say, GDPR is no joke.

Unlike most of our blogposts, this one is going to be a little more technical. With that said, let’s dive in!

Data Storage

The first and most critical thing to figure out is what kinds of data we store, and in which systems. We’ve been in business for 10 years, which means that we have accumulated a lot of cruft: old services (like backup systems) that have been replaced by newer services, old database tables, old diagnostics data, and so on. So first we had to make an exhaustive list of all places where data is stored, and then we had to figure out an appropriate GDPR strategy for each one. Following the YAGNI mantra, deletion was our solution of choice.

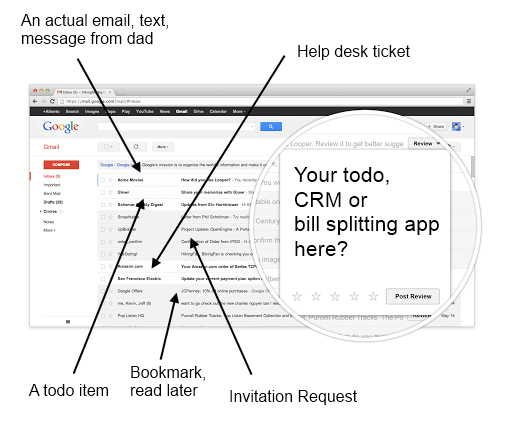

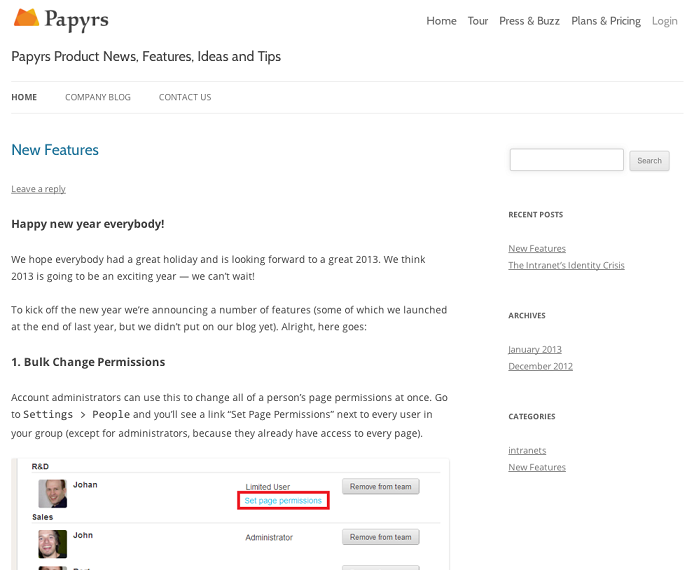

Our primary product is a drag&drop intranet SaaS webapp called Papyrs. Users can create pages, add file attachments, image galleries, comments, forms, and so on. Because we respect the privacy of our customers we don’t know if any of the data stored on our service by our customers is sensitive data protected by GDPR. Consequently we take the most conservative assumption: everything stored by our customers is critically confidential data, or “personal data” in GDPR parlance (commonly called PII, personally identifiable information). This means that when a customer closes their account all their data has to be permanently incinerated.

That’s easier said than done. Papyrs has several types of data. We’ll briefly cover each type.

File uploads

We don’t use a storage service like Amazon S3 so we don’t have to worry about an orphaned bucket floating around somewhere. All files customers upload to Papyrs end up in the customer’s directory on our own CDN (mirrored using rsync). The metadata on each file (e.g. permissions, statistics, ownership) is stored in the database: every file has a corresponding database record. The database is transactional, but the filesystem is not. When an upload fails the database transaction is rolled back, but a partially uploaded or broken file may remain. In an ideal world the database and the filesystem would be guaranteed to be in sync, but we have to make do with the abstractions we have. Partial uploads still get deleted eventually and they cannot lead to errors visible to the end user, but we still have to be mindful of the subtleties of dealing with the filesystem.

Papyrs dynamically resizes images as needed (to improve load times and to save bandwidth for mobile devices), which means that for every image uploaded to Papyrs there can be many cached thumbnails. The story here is similar to that of regular file uploads, except that we have to be extra careful to make sure all the right thumbnails get deleted.

The lesson here is to make sure you keep track of stray files, and that you don’t delete a row from a database until you’re absolutely sure all the corresponding files on the filesystem are gone. Otherwise it may be too late to identify which files have to be deleted!
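To make that ordering concrete, here is a minimal sketch in Python. It is not the Papyrs code: the `files` table, its columns, and the `.thumb-*` naming scheme for cached thumbnails are all assumptions made up for illustration. The point is only the order of operations: files and thumbnails go first, and the database row is deleted only once they are gone.

```python
import glob
import sqlite3
from pathlib import Path


def delete_customer_files(db: sqlite3.Connection, customer_id: int) -> int:
    """Remove a customer's files from disk first, then their database rows.

    Returns the number of file records that were fully removed.
    """
    rows = db.execute(
        "SELECT id, path FROM files WHERE customer_id = ?", (customer_id,)
    ).fetchall()
    removed = 0
    for file_id, path in rows:
        original = Path(path)
        # Hypothetical naming scheme: thumbnails live next to the original
        # as "<name>.thumb-<size>".
        pattern = glob.escape(original.name) + ".thumb-*"
        thumbnails = list(original.parent.glob(pattern))
        try:
            for target in [*thumbnails, original]:
                target.unlink(missing_ok=True)
        except OSError:
            # Keep the row: it is the only record of which files remain.
            continue
        # Only now is it safe to forget where the files were stored.
        with db:
            db.execute("DELETE FROM files WHERE id = ?", (file_id,))
        removed += 1
    return removed
```

If a file cannot be removed, its row survives, so a later run can retry instead of leaving an untraceable file behind.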

Fishermen don’t have to worry about GDPR


Log files

All systems that make up the Papyrs service keep log files that contain information about system health, performance, errors and warnings, and other diagnostic information. Although typically these log files contain nothing even remotely sensitive or confidential, some information in log files (like IP addresses) may be considered PII under GDPR. Figuring out exactly which lines in which log files relate to which user on Papyrs is difficult and exactly the kind of privacy-invading work we don’t want to do. So instead we fall back on our pessimistic strategy: we treat all data, except for the data we know for sure is innocuous, as confidential. Our solution: automatically delete (rotate) all log files older than a few weeks. In practice we rarely needed diagnostic information older than that, anyway.

Here the RotatingFileHandler and TimedRotatingFileHandler classes from Python’s logging.handlers module are a great solution. Of course we also rely on Debian’s logrotate whenever possible.
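As a rough illustration of the Python route, the sketch below sets up a logger that starts a new file every midnight and keeps a fixed number of old files. The function name and the 21-day retention are assumptions chosen for the example (“a few weeks”), not our actual configuration.

```python
import logging
from logging.handlers import TimedRotatingFileHandler


def make_expiring_logger(name: str, path: str, keep_days: int = 21) -> logging.Logger:
    """Return a logger whose file rotates daily and keeps `keep_days` old files."""
    handler = TimedRotatingFileHandler(
        path,
        when="midnight",        # start a new file once a day
        backupCount=keep_days,  # rotated files beyond this count are deleted
        encoding="utf-8",
    )
    handler.setFormatter(logging.Formatter("%(asctime)s %(levelname)s %(message)s"))
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.addHandler(handler)
    return logger
```

One thing to keep in mind: the handler only checks whether it is time to rotate when a record is written, so a process that goes quiet for weeks will not clean up its old files until it logs again.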

But what to do with services that don’t listen to the SIGHUP signal sent by logrotate, and that don’t have any in-built way to rotate their own log files? Well, there is an easy trick:

$ tail -n 1000 logfile.log | sponge logfile.log

This truncates the file and puts the last 1000 lines back into the logfile. You can’t beat the simplicity, but if you want to cull the beginning of your log files this way you have to be mindful of one thing: while most logging systems with multiple writers use a mutex to synchronize writing to the same file, this bash hack doesn’t. So you may end up with a malformed line or two in your log file as two processes write to the same logfile simultaneously. If that’s unacceptable, you can instead create your own lazy log rotation by sticking this in a cron file:

$ cp logfile.log logfile.log.1 && : > logfile.log

The copy here is deliberate: you can’t move the file. This is because the services that are writing to the logfile will just keep writing to their open file handle, blithely unaware that the file has been moved, renamed, or even deleted. The logrotate option copytruncate works like this too.
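The same copy-then-truncate idea can be sketched in Python, extended to keep a small, bounded number of old copies so that the copies themselves eventually disappear. The function name and the `keep` parameter are made up for this example.

```python
import os
import shutil


def copy_truncate(log_path: str, keep: int = 1) -> None:
    """Copy the log to numbered backups, then empty the original in place."""
    # Drop the oldest backup and shift the remaining ones up by one.
    oldest = f"{log_path}.{keep}"
    if os.path.exists(oldest):
        os.remove(oldest)
    for n in range(keep - 1, 0, -1):
        src = f"{log_path}.{n}"
        if os.path.exists(src):
            os.replace(src, f"{log_path}.{n + 1}")
    shutil.copyfile(log_path, f"{log_path}.1")
    # Truncate rather than replace, so writers keep a valid file handle.
    os.truncate(log_path, 0)
```

As with logrotate’s copytruncate, anything written between the copy and the truncate is lost, and this only behaves well when the writing service opened the file in append mode.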

Cache files and other temporary data

Memcached, message queues, and other systems for transient storage can easily end up containing confidential data. Luckily, we didn’t have to do anything here. Everything we store in memcached expires quickly, so we don’t have to worry about stale data residing in an in-memory store. We made that decision a long time ago for performance reasons. Too many web applications end up relying on memcached for performance, so much so that when memcached resets, the database and workers can no longer keep up with all the traffic. We didn’t want to worry about that, so our memcached data can be purged at any time. Everything in our message queue has a deterministic lifetime as well.
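To illustrate the principle (not memcached itself, and not our code), here is a toy in-memory cache in Python that refuses to store anything without a bounded lifetime and that can be purged at any moment. The class name, the `max_ttl` limit, and the injectable `clock` are assumptions for the sake of the example.

```python
import time
from typing import Any, Callable, Dict, Optional, Tuple


class ExpiringCache:
    """Toy cache in which every entry must carry a bounded lifetime."""

    def __init__(self, max_ttl: float = 300.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._max_ttl = max_ttl
        self._clock = clock
        self._items: Dict[str, Tuple[float, Any]] = {}

    def set(self, key: str, value: Any, ttl: float) -> None:
        # Reject entries that would live forever or longer than allowed.
        if not 0 < ttl <= self._max_ttl:
            raise ValueError(f"ttl must be in (0, {self._max_ttl}] seconds")
        self._items[key] = (self._clock() + ttl, value)

    def get(self, key: str) -> Optional[Any]:
        entry = self._items.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            del self._items[key]  # expired entries are dropped on read
            return None
        return value

    def purge(self) -> None:
        """Drop everything; callers must cope with a cold cache."""
        self._items.clear()
```

Because callers can never assume a value is still cached, purging the whole cache is always safe, which is exactly the property that makes GDPR a non-issue for this layer.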

This is one of those cases where good architectural design choices end up paying off in unexpected ways! Always a nice surprise.

Full Text Search

We use Sphinx for full text search. Sphinx is terrific. Performance is great and the quality and ranking of the search results is superior. One problem is that we have one Sphinx database for all our user data, and a full text search database like Sphinx isn’t designed for quickly deleting a range of records. Re-creating the entire full text index isn’t an option, as that would take too long.

The only update mechanism Sphinx knows is “merge & swap”. A main index and a delta index are merged together into a new index and then the new index is swapped with the old one. The delta index can contain delete indicators. Then during the merge phase those rows with delete indicators get dropped from the main index.
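The following toy model in Python shows the shape of that merge. It does not use the Sphinx API: the indexes are plain dictionaries and the delete indicators are a set of document ids, all invented for illustration.

```python
from typing import Dict, Set

Index = Dict[int, str]  # document id -> indexed text


def merge_indexes(main: Index, delta: Index, deleted_ids: Set[int]) -> Index:
    """Build a new main index from main + delta, honouring delete indicators."""
    # Rows flagged for deletion are dropped from the old main index.
    merged = {doc_id: text for doc_id, text in main.items()
              if doc_id not in deleted_ids}
    # Rows in the delta are new or updated documents and always win.
    merged.update(delta)
    return merged
```

The “swap” is then just a matter of pointing searches at the returned index instead of the old one; the old index is never modified in place.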

Relational Database

Deleting user data from a database is either trivial or practically impossible, depending on what it means to delete. Files on a filesystem can be securely deleted if needed. Or you can simply create an encrypted directory and throw away the key to get rid of the data securely. But when rows are deleted from a database like PostgreSQL or MySQL the data isn’t guaranteed to be gone from the hard drive. The records may persist in the binary logs used for database mirroring, or the data may simply persist as junk data inside the database, even after issuing a regular VACUUM.

How far do we want to go here? Do we have to issue a VACUUM FULL (which re-creates the entire database table) to securely delete data? Does that really provide additional guarantees when data may still persist on sectors of hard drives? We don’t know. The GDPR informs us that we have to “erase” data (including copies and replications thereof), but we have no idea what it actually means in the context of software. We think deletion to the point where we cannot recover the data — even if we wanted to — qualifies as real erasure.

I’m sure the fine folks who wrote this regulation thought carefully about what it really means to erase data. They’re just sparing us the technical details so we can experience the joy of figuring it out for ourselves.

Backups

Backups and GDPR are, on a fundamental level, diametrically opposed to each other. With a good backup solution you know that even if disaster strikes no user data is lost and the service can be entirely restored. To comply with GDPR a user has the “right to be forgotten” which means their data cannot be recovered and is lost forever. So how can we satisfy these conflicting goals? Clearly we cannot just destroy our backups, but we can’t keep customer data in perpetuity either.

Our solution: have backups rotate on a strict schedule, and delete old backups, including remote and offsite backups. The only difficulty is that this adds a delay to irrevocable data deletion. If, somehow, data ends up in a backup set that should already have been deleted, then it could take another 6 months after we fix the mistake for the data to be entirely gone from all backups.
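A strict rotation schedule boils down to something like the Python sketch below, run on every machine that holds backups. The directory layout, the reliance on file modification times, and the 180-day figure used in the usage note are assumptions for illustration only.

```python
import time
from pathlib import Path
from typing import List, Optional


def prune_backups(backup_dir: Path, max_age_days: int,
                  now: Optional[float] = None) -> List[Path]:
    """Delete backup files older than `max_age_days`; return what was deleted."""
    now = time.time() if now is None else now
    cutoff = now - max_age_days * 86400
    deleted = []
    for path in sorted(backup_dir.iterdir()):
        # Assumes the modification time reflects when the backup was made.
        if path.is_file() and path.stat().st_mtime < cutoff:
            path.unlink()
            deleted.append(path)
    return deleted
```

Calling `prune_backups(Path("/backups"), max_age_days=180)` from a daily cron job would enforce a six-month window, provided the same rule is applied to remote and offsite copies. Note that copying a backup can reset its modification time, in which case encoding the date in the filename is the safer choice.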

There is no perfectly satisfactory solution here. For future products we’ll probably create backups strictly on a per-customer basis. Then we can easily backup/restore an individual customer’s data as needed, and if a customer exercises their GDPR “right to be forgotten” then we can immediately purge all their data from our systems.

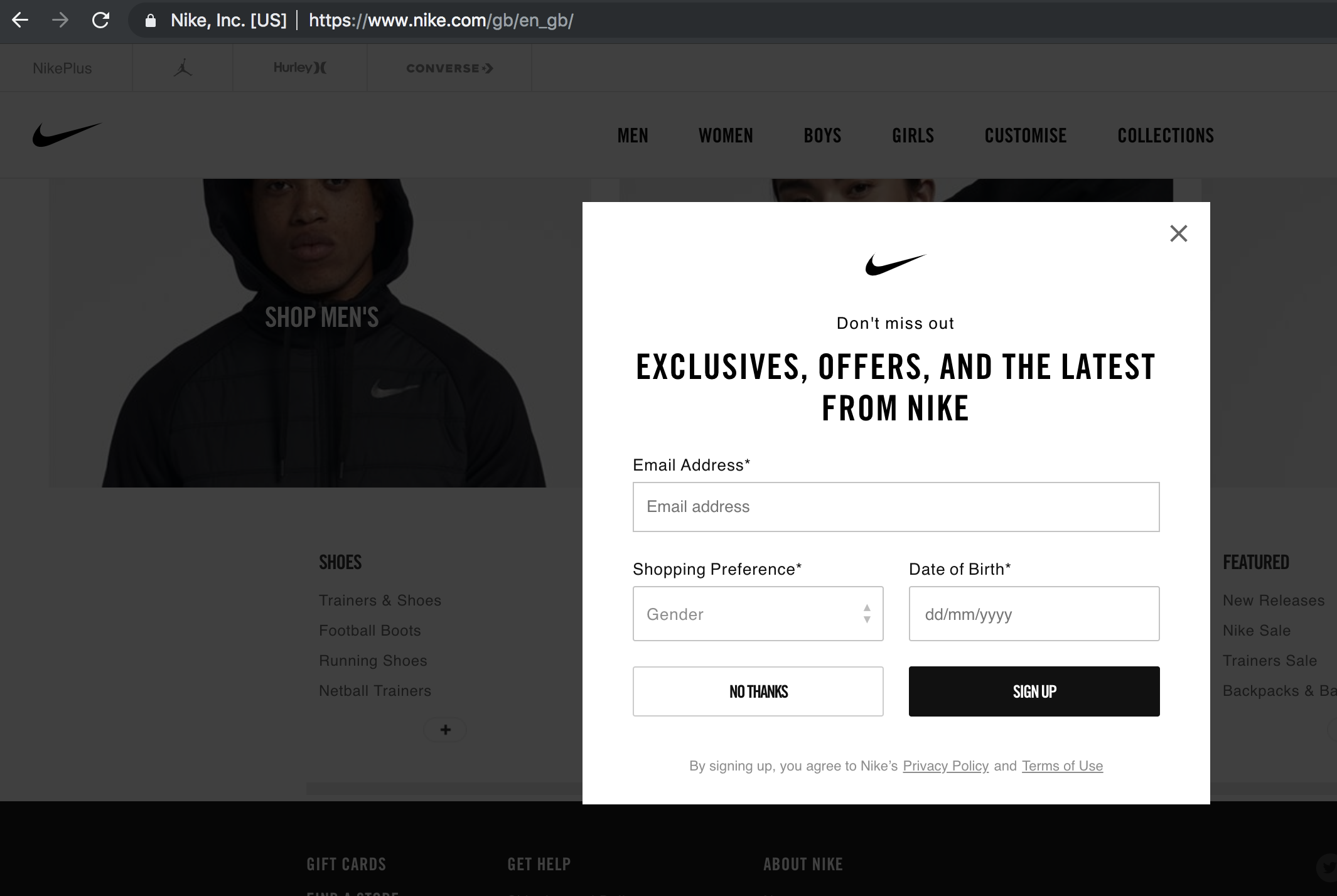

Third party services

We don’t share any customer data with 3rd party services. We don’t share data with advertisers. We don’t use any software for sales lead generation. We like to build everything ourselves, and that has served us well so far. The only 3rd party service we use is Pingdom, and they don’t have access to any confidential data of ours.

Data Export

GDPR gives customers the “right to data portability”. We already had a Papyrs Backup system in place, so we didn’t have to do anything here.

PCs, Laptops and Phones

Here we just have to take some common sense precautions. There shouldn’t be any sensitive customer data on our work devices, but old devices get securely wiped just in case. We use full disk encryption on all machines (macOS FileVault and TrueCrypt), and we use iPhones because they are encrypted. If somehow an old device ends up in the trash we can be confident no data leak will happen as a consequence.

Employee records

We don’t just have GDPR obligations toward our customers, but to employees and contractors as well. They, too, have a right to know what data we have on them, and they have a right to be forgotten after their employment has ended. There’s not much to say here, except that having a central place where all data is stored makes deletion a whole lot easier.

Customer Rights

So far we’ve mostly talked about the technical implications of GDPR. GDPR also specifies that our customers have a number of concrete rights: We have an obligation to tell customers what data we have on them and how we use it. We have an obligation to notify our customers when data leaks occur. Customers have a right to tell us not to process their data.

We can imagine these rights lead to a lot of difficulty for many businesses, but not for us. Thankfully we don’t need to collect any personal data from our customers except for a name and address for billing purposes, so we don’t. Our business model is really straightforward and we don’t need to engage in any shenanigans. Customers are free to come and go as they please, and they can take their data with them.

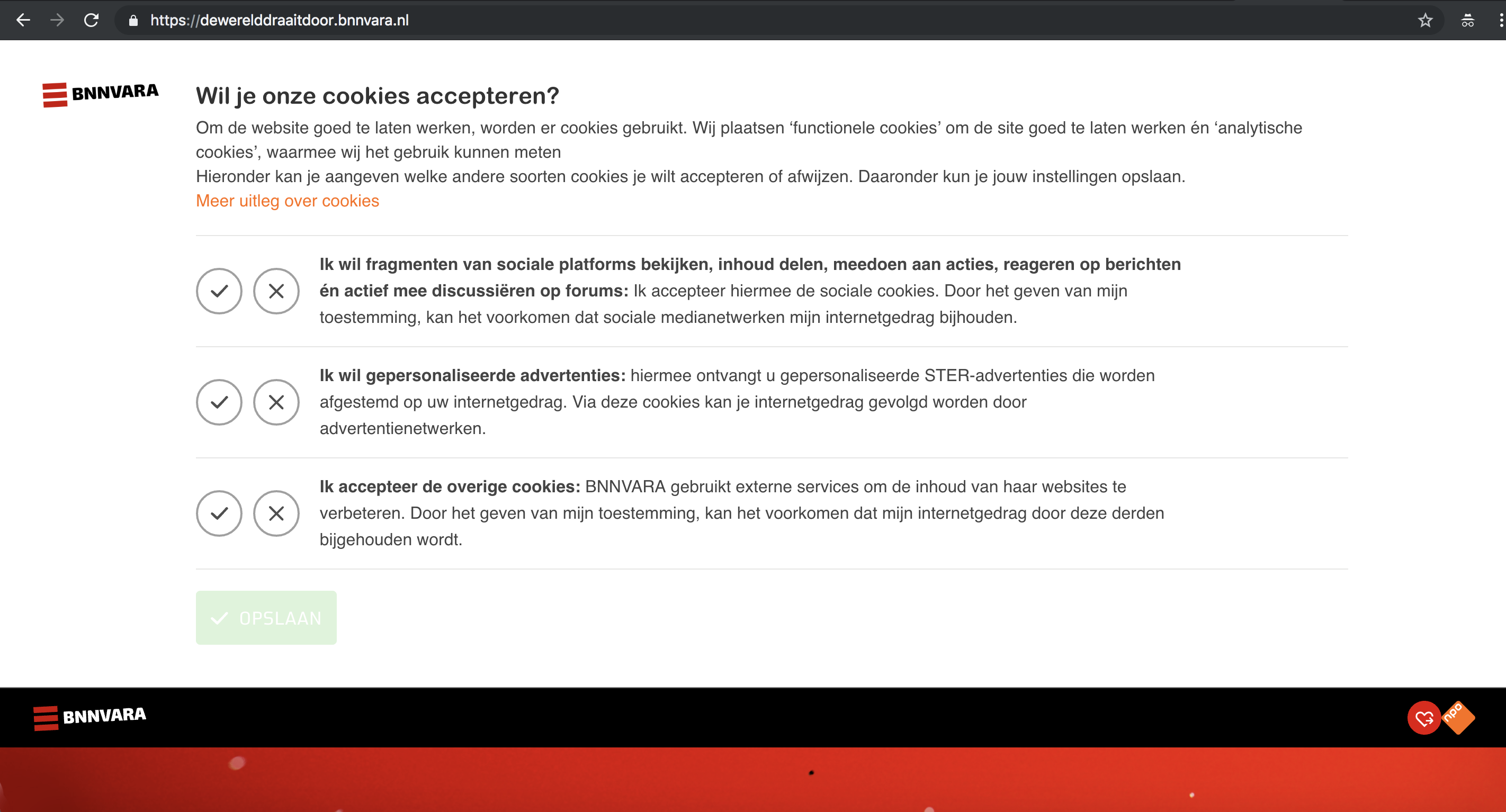

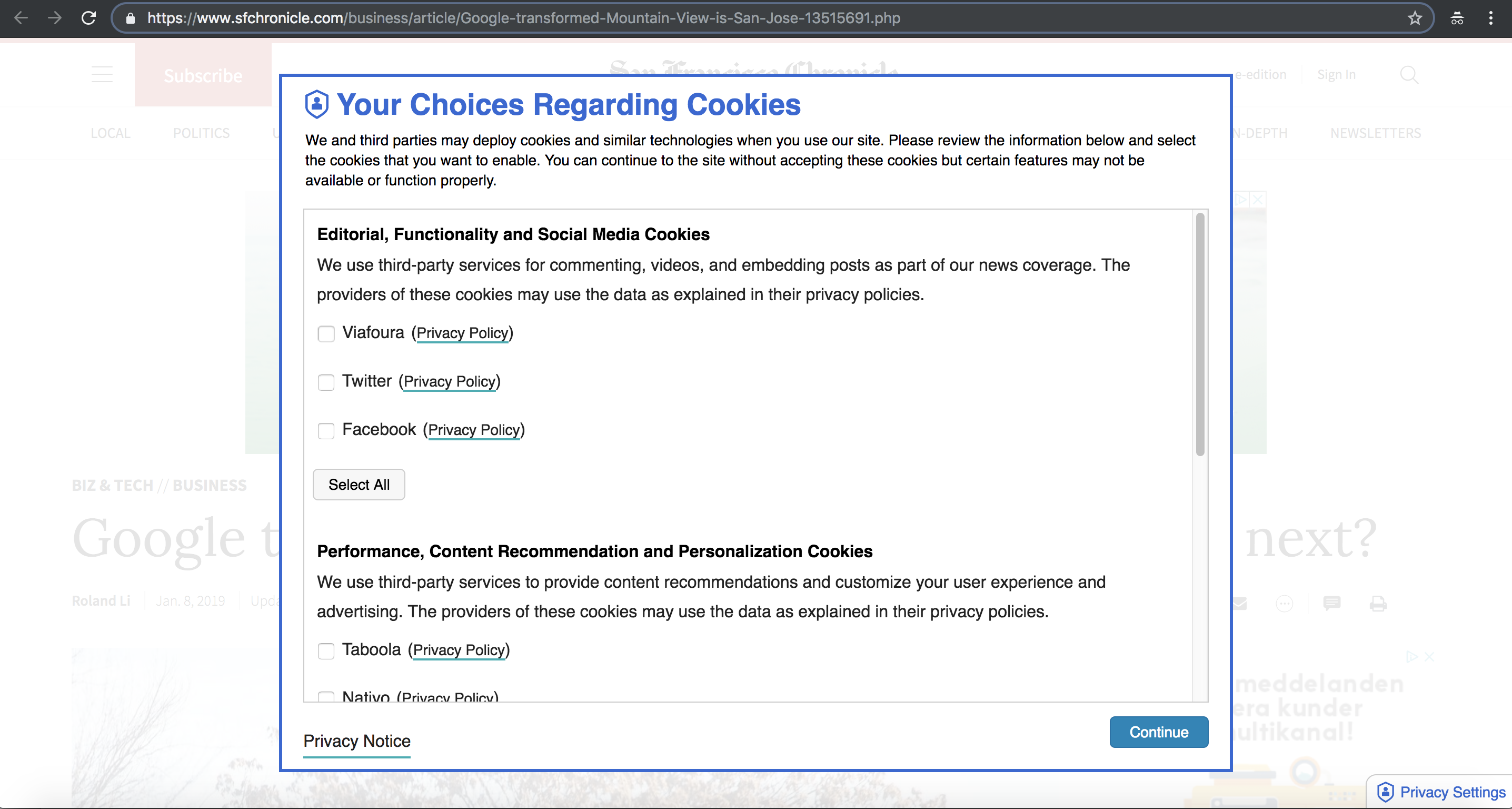

Appreciation of the GDPR landscape


Conclusion

The main thing we learned in the past year while preparing for GDPR is that it’s much easier to design a new service with GDPR in mind than to retrofit GDPR compliance onto systems that were created many years ago. We are really lucky that we already stored 90% of our data in a structured way where every piece of data had a known owner (so we can easily figure out what to delete). However, we had to make sure that all data on our servers was accounted for, not 90%. If you have a pile of mystery data somewhere — even when nobody has access to it — that’s still a GDPR liability. As the saying goes, when you’re 90% done you still have 90% of the work ahead of you.

We hear that companies are only now starting with their GDPR preparations, but time is running out. GDPR compliance isn’t something that can be outsourced or something a small task force can do by itself, because GDPR affects customer support, sales, operations, marketing, HR, and every other part of your organization. GDPR affects all data, and data is everywhere.

If your organization has to be GDPR-ready in a few months and you haven’t started yet, I wish you luck :)

Thanks for reading!
