Intro

People store a lot of documents and other business knowledge on Papyrs and so we wanted to add search functionality so people could get to their documents more quickly. Here we’re going to give the technical explanation of how we got it all to work.

Much to our surprise we couldn’t find any package out there that met our (pretty basic) criteria. We were looking for:

- really fast search (so results can be displayed as you type)

- real-time indexing of new or changed documents (otherwise people who try our product for the first time won’t find the document they just created)

- reliable unicode support (7 bits sure ain’t enough for everybody)

- support for infix searches (important for reasons mentioned later)

- an indexer and searcher that can scale relatively easily to multiple processes or servers when/if the need arises

- stable performance (no segfaults please)

- a search engine that lets us change the schema of the documents we’re indexing without breaking anything.

- easy integration with a Python web app (using Django)

We looked at a number of search engines:

Lucence, Solr, Sphinx and PostgreSQL Full Text Search. We played with all of them but only Sphinx came close to meeting our criterea above. We’re pretty confident, looking back, that we made the right decision.

General introduction to Sphinx

Sphinx has two parts, an indexer and a search daemon. The search daemon listens for search queries such as “alpha & (delta | gamma)” and goes through the indexes for matches. The indexer reads data from a data source (relational database, XML pipe) and indexes it according to the document schema. When indexing has finished, it rotates (swaps) the index currently used by the search daemon with the new one. The old index is then deleted. This means (re)indexing and searching can happen in parrallel, and even on different physical machines if needed.

Implementation

We have different sorts of documents: Pages, Comments, Attached files, Profiles, and filled out Forms. These documents are non-uniform: different sorts of documents have different attributes. So we don’t want to hard-code the structure of the index in sphinx.conf. Instead we’ll use sphinx XML pipe functionality and generate the schema structure and data from the Django Model as needed. So for each Django Model we create a sphinx index. Then when a user searches we do a search for every document type and combine the results and display them to the user.

We connect Sphinx to Python with the Python library sphinxapi.py included in the Sphinx package. It’s a pretty straightforward mapping of API functions to Python methods. You can set the match mode, how the matches are sorted, which indexes to search through and so on. There are also a number of open source libraries that connect Django and Sphinx. We looked at Django-Sphinx but it hasn’t been maintained in the past couple of years and it doesn’t support XML based data sources (which we want to use). It instead generates a sphinx.conf file with the indexes and schema structures in there.

Generating XML data

So let’s illustrate how XML generation works using an example Comment model. We add a Sphinx metaclass for each Django Model we want to index.

The classes Attr and Field are simple wrapper classes that we use to generate the Sphinx schema from. They also make sure that when the XML data is generated that the data is of the correct type. Sphinx has a very fragile XML parser, so we have to make sure that boolean columns only contain boolean values, that everything is escaped properly and so on.

Using the SphinxSchema definition above we can easily generate the XML schema:

So with the combination of schema and a Django QuerySet we can now generate the XML data for sphinx to index. Pseudocode:

This works but we have to optimize: we don’t want to reindex everything when a single record changes. So we use two indexes for every document type: a main index and a delta index. The main index is the large index that contains everything that hasn’t been touched recently and the delta contains those documents that have been recently created, modified or deleted. The delta index is small and can be re-indexed frequently. The easiest way accomplish this is to give every model an “updated_at” timestamp, and every time a record is changed you update the timestamp.

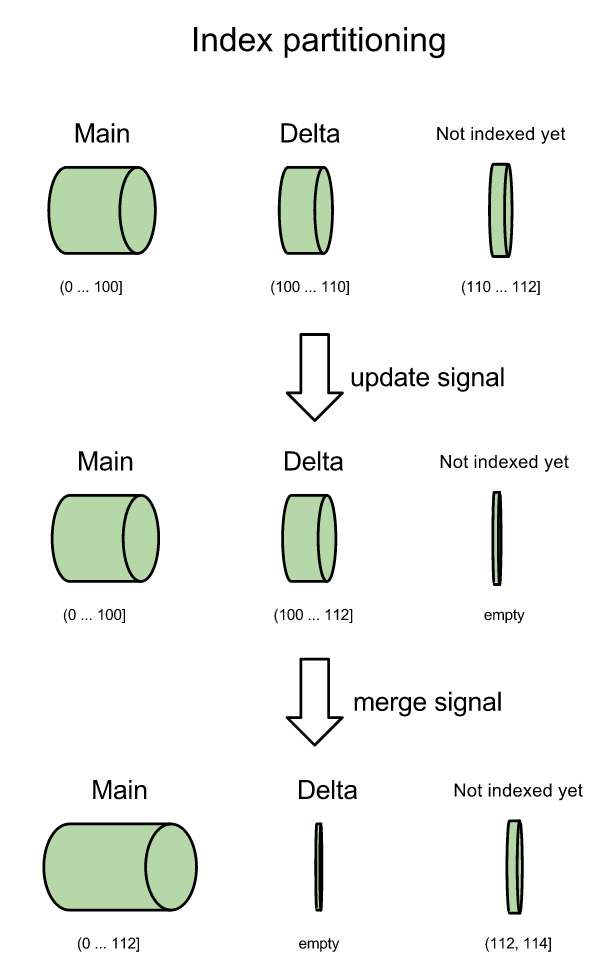

Then you just partition the indexes into parts: the main index contains all records where [0 <= updated_at <= last_merge_time]. The delta contains all records where [last_merge_time < updated_at <= last_delta_time]. More partitions can be added if needed, but two indexes per document type will probably be good enough unless you have a huge database or documents change very frequently. Anyway, every time a user changes a document the indexer starts and re-indexes the all files that have been changed since last_merge_time and updates last_delta_time to the current time (technically, the time when it *started* delta-indexing, because that's when the database transaction starts). See the illustration:

After an update the delta partition is completely re-indexed. Then the delta and main indexes are merged into one. During this time a few new documents arrive and the process starts anew.

So how do we start the indexer from django? Easy, we just touch(1) a file whenever a document is saved. Django has a post_save signal which we use to catch all save events. We check if the model that's being saved has a SphinxRecord metaclass and if so, we wake the indexer. It's the simplest solution we could think of :).

Abbreviated version of the daemon that spawns the indexer (we left out error checking, logging, etc):

It's just busy waiting until a process touches the PID file, then starts the sphinx indexer. Note that because we spawn new processes we can easily change the python code for updating/merging without having to restart this daemon. Also note that when multiple people touch the pid file the indexer is still only started once. And this way we also know for sure that the delta index and merge processes will never run at the same time.

Let's do a quick back of the envelope estimate: Delta indexing typically takes between 2 and 10 seconds, and if we merge least once every 500 delta indexes, then that's 1 merge roughly every hour. We currently index only a couple million documents and the indexes are only a few gigabytes large. Merging a delta and a main index is essentially the merge step of the merge sort algorithm. The two indexes are just interleaved, so the merge step takes roughly the time needed to copy the files. Copying a few gigabytes worth of indexes every hour is absolutely fine from a performance point of view so this straightforward main+delta solution is good enough for our purposes. And yep, in practice the indexer is running pretty much all day and night, because people are adding documents to Papyrs all the time.

Ghosting

Ghosting is when you delete a document but it still shows up in the search results for a while after. Suppose the main index contains document ids {1, 2, 3} and delta is {4, 5}. Then you change the title of document 2 and as a result it goes to the delta index. So main: {1, 2, 3}, delta: {2, 4, 5}. When you search for the document's new title it shows up exactly as expected. Because document 2 has the same primary key in the main and delta index Sphinx knows only to return the result from the delta index, so you don't get duplicate results. Perfect. Now you delete document 2 and you're left with: main: {1, 2, 3}, delta: {4, 5}. And when you search for the old document title it suddenly shows up, because the document is still in the main index. That's called ghosting and we want to keep it from happening.

The solution: we give every document type an attribute is_deleted. We then search with a sphinx filter is_deleted=False. Sphinx doesn't let us change fields (variable length text) but sphinx does allow us to update boolean values, integers and timestamps in a search index. So, whenever a document is modified we set is_deleted=True in the main index and in the delta index. This ensures that the old document doesn't show up in the search results at all anymore. Then, a few seconds later the new delta index will be ready that contains the updated document.

Permissions

With Papyrs different people in a group have different permissions. So we have to make sure that we display documents to a user if and only if the user has sufficient permissions to at least view that document. So after Sphinx comes up with a list of documents that match what the user searched for, we simply filter out those documents that the user can't access.

Indexing attachments

We index inside attachments, such as PDFs, Excel spreadsheets, Word documents and so on. This means we have to extract the text content of these different document formats. For this we just use the packages out there: ps2text for PDF files, antiword for MS Word documents. However, many of these text extraction tools mangle the text somewhat. Newlines and punctuation go missing, lines are concatenated without spaces between them, and garbage characters end up in the middle of words. We clean up the output by simply removing all suspicious looking characters and stripping all HTML tags from it.

If all content is really clean then you rarely have to search for only part of a word. But when some of the content is a bit messy then infix search becomes really valuable. Half the spaces in a document may be missing and you're still going to find matches with perfect accuracy.

Tips

- make sure you bind the search daemon to localhost otherwise everybody can connect to it. If you have a dedicated sphinx server, set up an SSH tunnel (i.e. ssh -f -N remote_server -L[remote_port]:localhost:[local_port]) because sphinx doesn't have any built-in authentication or encryption.

- if sphinx segfaults for unclear reasons it's probably because of the forking model you configured in sphinx.conf.

- we tried Sphinx' alpha real-time index support, but it was still very unstable (segfault gallore) and it doesn't support infix searching. It's in active development though, so that might be much better soon!

- compile Sphinx from source with at least libexpat and iconv support.

Conclusion

We've had this setup in production for almost 3 months now and it all works works great. Searches typically take just a few milliseconds and new results are added to the index within 5 seconds on average. We've spent a lot of time to make sure that search "just works". So we thought we might as well document what decisions we made and why. This is the document I wish existed when I started working on Papyrs search.

Phew, that's it. This turned out a lot longer than I had anticipated, but as I'm proofreading this there isn't much I can leave out. Thanks for reading this far. If you found this interesting, please spread the word or leave a comment!

PS: I could open up the source (it's few hundred lines of Python) and throw it on github. I'd have to spend an afternoon refactoring it though, so let me know if you're interested.

Great write up,

I have couple of questions though:

1. How many records are in the index?

2. How many get updated / added / deleted?

3. Is your indexed distributed or uses only 1 server?

Cheers!

Thanks!

1) a couple of million records in total (couple of GB)

2) varies throughout the day, maybe a couple per second

3) single server (although pretty beefy)

The good thing about the setup as described in the blogpost is that we can easily throw more hardware at the problem when we need it, because different parts of the index can be indexed independently. Searches themselves are read-only, so they scale really easily as well. The most difficult issues are accuracy (no ghosting) and index speed (re-indexing part of the database).

But we probably won’t shard our database or search until we really have to. If 37signals can get away with a single database server (http://37signals.com/svn/posts/3089-three-years-later-mr-moore-is-still-letting-us-punt-on-database-sharding) then we’ll probably be fine with a single search server as well.

Great stuff…very helpful!

Why not use Sphinx’s K-List functionality to deal with ghosting?

Good point. There are a couple of reasons. One reason is that the kill list in Sphinx, as far as I know, works only in combination with an SQL data source, using the

sql_query_killistdata source parameter. We use an XML pipe as data source. Also, the kill list is only checked during indexing: every time sphinx (re)-indexes it will exclude the ids from the index that are returned by the kill list. So after deleting a page but before re-indexing has finished (and the indexes rotate) ghosting problems will still occur. So that’s another downside.More recently Sphinx added real time attribute updates. This allows us to distinguish between deleted and archived documents (just add flags for both), to add flags for permissions and so on. And we can easily undo an archive action: we just toggle the flag back to its original state. With a k-list we can’t do any of this.

@PS: did you “throw it on github” ;] ?

We didn’t. Sorry. So far only 3 people (including you) have shown interest in the complete code.

I`m interested in this code too :)

Wow! Fantastic.

We have also implemented sphinx on our website allevents.in. We are using sphinx for full-text search.

I have one question.

For example there is one table called event and one table called attendees which has a fk to event. Now i have 100 attendees. I want to find out events which are attended by these 100 attendees using sphinx.

How can I do this? Currently we are indexing events only.

Hmm, interesting. Sphinx doesn’t have support for joins or other complex queries, even though Sphinx has a mysql-like query syntax. So in your situation we’d probably fall back on MySQL or PostgreSQL and look for the records that way. A regular “WHERE attendee_id IN (list_of_attendee_ids)” query would do the trick. So you can take results from sphinx, then get some additional records from your database, and then query sphinx some more if you have to. I can’t recommend using Sphinx as a makeshift relational database.

Thanks Diederik.

Hi Diederik,

I don’t see use of last_delta_time. Where did you use that?

The short answer: we don’t really use it.

The somewhat longer answer: it’s just nicer to explicitly keep track of the begin and end points of every partition. That way we can make sure that the partitions don’t overlap and so on, without having edge cases for the first and last partition. Of course, back when I wrote the code I didn’t know if we would need more than 1 delta index (now it looks like we don’t). When you have multiple delta indexes we’d have to keep track of where the first one ends and the second one begins.

We do use it occasionally, now I think of it. “last_delta_time” is the end of delta index. So now() – last_delta_time indicates how much time has passed since the last successful (delta)index/merge. We use this to quickly check if everything is still running smoothly.

Just FYI, I’m pretty sure you could do that kind of filtering (event/attendees) with some MVA trickery (Multi-Value Attributes).

We have a few many-to-many relationships in our indexed data, and use MVAs to filter those “documents” that are in one or more of given “categories” (for example, if we had a N:M relationship between documents and categories)

Hi Diederik,

Thanks for posting this article. I followed it and setup our own sphinx search engine. We have done almost the same but with few minor changes. It would be nice if you can also explain how are you managing deleted/updated records. We have done that using kill-list. But i would be nice if you can share your thoughts on it.

Hi Amit,

Very cool. All our sphinx models have an is_deleted flag. When a record is updated/deleted is_deleted is set to True. When querying sphinx we always filter results on is_deleted=False.

So when items get updated the old data will stay in Main (with is_deleted=True) and it will also be in Delta (with is_deleted=False). Whenever we merge the old data will get expunged from Main.

Using a kill-list is a fine solution too, of course. See my earlier answer to Marc for more of our motivation.

Congrats on getting everything to work!

Hi Diederik,

I have learnt a lot from this post. Our site was running perfectly but since last two days we are seeing abnormal behavior in sphinx. Searchd process stops automatically. I changed Workers = Thread to Workers = Fork and then it is going good. But now sometime it throws error, “Zero-size response received”. I checked this with multiple cases and realized that this happens with complex queries only and with the queries which returns possibly huge data. For ex. if i remove the sorting condition, the query returns the data else error. If i change the limits of records to 100 from 500, the query works fine. Please help me with this, if you had faced and solved this problem already.

Hmm, that looks like an internal sphinx bug. We haven’t seen it ourselves (maybe because we only return the best search results, and not hundreds of matches), so I can’t help you with specifics. You can try upgrading to the latest beta, see if you get different results if you try from the command line, enable warnings and check log files, and so on. Or you can just restrict the number of search results and load more results as the user scrolls down the page (a bit like Google image search).

Hi Diederik,

Great Article…. You really managed the case well.

I have just started today on sphinx. I have to search from different type of documents posted by users from application.

Can you make favor by posting your code on github as we are really interested in your code.

I have to prepare code in php so will use your code for reference only. But this will be great help.

Thanks

Great article!

this is pretty much what I have been looking for. did you try other tools than antiword and ps2text and made a comparison/conclusion to use these two?

I would also be very interested in the python code.

thanks

robin

Glad you like it!

We also use pdftohtml, and we fall back on ps2txt when it fails. Modern Office files (docx, xlsx, pptx) we unzip, strip the XML tags and index the XML text.

could you give some more insights why the other search engines fell short? especially lucene?

thanks

Lucene doesn’t play well with Python. PyLucene embeds a Java process inside a Python process, which isn’t pretty. Also, when you want to move the search daemon and indexer to different servers communication should go over a simple socket. I have no doubt that Lucene is a good product, but it doesn’t match our design/architecture needs.

Nice article

Im new with the sphinx, and ill use the main + delta index, base on what i read, they updating the main index, once a day, while the delta is every 5 minutes.

Base on your experience, how did you implemented the main + delta ?

Im just confused, if the data in the database is updated or deleted which is in the main index of the sphinx, how does my main index will be updated, if its setup to update once a day.

Thank you, hope you get my point, sorry for bad english

Great! I haven’t used Sphinx yet but I am looking forward to work with it soon. Thanks for the info I find sphinx really great!